A young man seen strapped to a ‘restraint chair’ with a hood over his face inside the now infamous Don Dale Youth Detention Centre has taken defamation action against a host of media companies for comments posted by users on their respective ‘branded’ Facebook pages.

The young man, Dylan Voller, was thrust into the public glare in 2016 after being featured in the ABC Four Corners episode ‘Australia’s Shame’, which exposed degrading and inhumane conditions faced by young people inside the Northern Territory youth detention facility.

The ABC’s shocking exposé quickly led to the announcement of a Royal Commission and Voller, who gave graphic evidence of his treatment during its hearings, reached a confidential settlement with the Federal Government.

Writing in The Australian regarding Voller’s defamation claim, journalist Dana McCauley labelled the case ‘ground breaking’, stating that it could ‘transform’ the way media companies operate on social media.

I agree with the first claim and doubt the second for reasons I’ll detail at a later date.

Perhaps forgetting for a minute that as a society we tend not to judge the actions of minors as being determinative of who they may grow to become as fully formed adults – McCauley goes on to lay into Voller, talking up a so-called ‘litany of public information about Voller’s violent and anti-social behaviour’.

No doubt News Limited and co will gleefully try to deploy this ‘information’ to their forensic advantage.

That aside, what’s interesting about the Voller case isn’t really the young man himself.

As noted by McCauley in The Australian, ‘the court will be asked to consider whether publishers can be liable for comments made on their Facebook pages…’. This is hugely important. And not just for the news media.

Interestingly, McCauley’s full sentence reads, ‘the court will be asked to consider whether publishers can be liable for comments made on their Facebook pages before they have been alerted to their allegedly damaging content’.

‘…before they have been alerted to their allegedly damaging content…’

Ummm… What?

The second part of the sentence is an odd point to make. The reason is technical: it demonstrates astounding ignorance of how Facebook actually works.

The broad issues at the heart of the case stem from the use of Facebook by large and well-resourced media organisations and the danger they face (at least in a legal sense) as they dive head first into the social media battlespace.

I’m going to try and cover some of these issues in a series of posts. This first post is simply an introduction to context and a bit of a long rant. I’ll get into a more detailed legal/technical analysis in later posts and discuss potential implications.

Kindly, I’ve been provided access to material that is going before the Court. While I don’t intend to publish it here, I hope to be able to offer some insights into just what’s at stake.

A market of diminishing returns

In a market of diminishing returns, where the rivers of gold that once flowed freely in the form of classified advertising have long since dried up, news organisations learned a thing or two from recent history.

Unlike the late 1990s and early 2000s, when emerging forms of internet commerce cut gaping holes in their traditional business model, large media organisations haven’t dilly-dallied when it comes to Facebook.

Facebook isn’t just big for news media. It’s more than that. Much more.

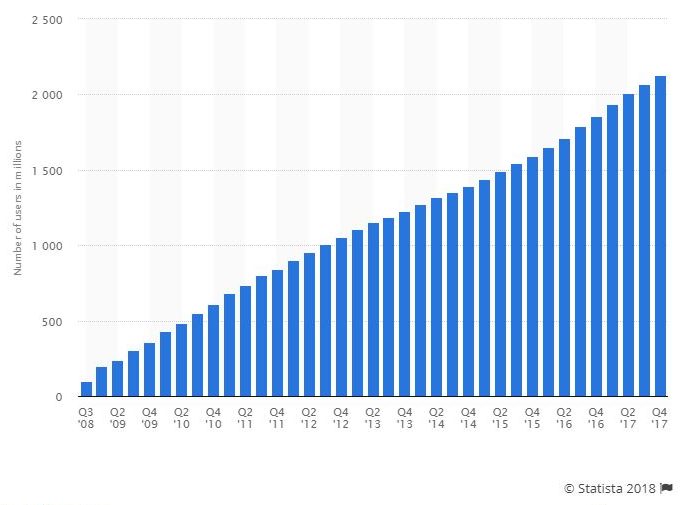

At the time of writing, Facebook has approximately 2.2 billion monthly active users. If you include just one of its other satellite companies, ‘Instagram’ – that figure comes in at around 3 billion.

To put that number in perspective, the total user base of Facebook & co exceeds the combined populations of India and China by 200 million.

If recent predictions about the ‘realistic possibility’ of Facebook’s 2018 re-entry into the China market are to be believed, its current growth trajectory suggests that 1 in 2 people globally could soon connect to the platform.

Facebook is an unstoppable digital tsunami.

Only Google’s YouTube comes close, coming in at 1.5 billion monthly active users. But again, like another of Facebook’s rivals, Twitter, YouTube has limited social functionality and purpose. What YouTube has was matched by Facebook long ago when it introduced the ability to upload and share video content.

Functional limitations on alternative platforms leave Facebook unrivalled in its ability to quickly roll out new concepts and rapidly respond to rivals. The result provides Facebook flexibility to squash competition.

For its part, Twitter, which is viewed (perhaps incorrectly) as Facebook’s biggest historical competitor, is also completely averse to radical innovation.

Twitter has tried – through small functional changes such as doubling its character limit to give regular users and trolls more space to endlessly fill the world with inane commentary. Its CEO laughably talked up the change as a ‘big move’.

In reality, this minuscule change was largely driven by investor concerns about stalled growth: Twitter’s 330 million monthly active users (Facebook has more than six and a half times as many) flatlined in 2017.

News Dominance

In Australia over 40% of people regularly get their news from Facebook.

Australia isn’t alone. Facebook dominates news shared on social media globally, including in the United States.

This rapid change in news consumption established a non-consensual symbiotic relationship between Facebook and media organisations.

I use the term non-consensual because what media organisation, large or small, wanting to appear relevant or drive revenue (clicks) would choose to opt out?

Can anyone realistically imagine Rupert Murdoch’s News Limited publications, The Australian or Herald Sun, ceding 40% of the online news market to Fairfax Media’s The Age or Sydney Morning Herald?

Facebook isn’t optional. Rather, it has evolved over time to become an indispensable tool used by media organisations to generate income through directed traffic and, yes, in some cases probably survive.

A recent article from the New York Times, written after Facebook announced changes to its ‘news feed’, (almost) hit the nail on the head.

‘Facebook became a news powerhouse with reluctance, and journalism executives allied themselves with it mostly out of necessity…’

The ability to ‘like’ a news organisation’s page, together with the use of embedded tracking tools, allows Facebook’s marketing arm to sell those same organisations a raft of products for directing crafted content straight to a user’s Facebook page.

Facebook even have their own ‘hub’ called the ‘Journalism Project’ to instruct the news media on issues regarding interoperability.

All your data belong to us

Highly detailed demographic data and other information can be readily extrapolated from Facebook. In turn, this data enables media organisations to serve up articles based on a user’s interests, measure performance and generate targeted advertising to drive products or sell subscriptions.

Facebook data also provides detailed insights, allowing participating media organisations to craft content based on how responsive their respective target audiences are to the subject matter. In other words, they learn what sells and what doesn’t.

In this sense, Facebook is changing “News” and the way people interact online in ways that those who set it up have come to regret.

Facebook’s former Vice President for user growth, Chamath Palihapitiya, described the ‘Facebook effect’ in the following terms:

It(s) literally is a point now where I think we have created tools that are ripping apart the social fabric of how society works. That is truly where we are…

He also described the very model which benefits both Facebook and, at least indirectly, media organisations as:

…short-term, dopamine-driven feedback loops that…are destroying how society works: no civil discourse, no cooperation, misinformation, mistruth. And it’s not an American problem. This is not about Russian ads. This is a global problem.

The former Facebook VP said in blunt terms that people should take a “hard break from some of these tools and the things that you rely on”, telling the audience he doesn’t allow his children to “use this shit”. Ouch…!

Cementing control of news

In the wake of the scandal surrounding Cambridge Analytica, a company that sneakily harvested 87 million Facebook profiles, Facebook suddenly announced changes to its news feed in response to the barrage of criticism it was receiving over the spread of “fake news” across the platform.

So reliant are media organisations on this model that even the mere suggestion of any change on Facebook’s part had some of them freaking out about the impact it could have on their bottom line.

Prior to the official announcement – Facebook flagged its planned transition in an interview with the New York Times. The article clearly sent shockwaves through newsrooms across the globe.

Writing for the New York Times the following week, in an article titled ‘The End of the Social News Era? Journalists Brace for Facebook’s Big Change’, journalists Sapna Maheshwari and Sydney Ember did a round-up of the news media’s anxiety at what lay ahead.

“Nobody knows exactly what impact it’ll have, but in a lot of ways, it looks like the end of the social news era,” Jacob Weisberg, the chairman and editor in chief of the Slate Group, said on Friday. “Everybody’s Facebook traffic has been declining all year, so they’ve been de-emphasizing news. But for them to make such a fundamental change in the platform — I don’t think people were really anticipating it.”

And, to illustrate just how reliant the news media is on Facebook for revenue:

“Changing the terms rapidly is really bringing into focus just how powerful the platforms have become and how the infrastructure is a very difficult place for publishers to operate and navigate,” John Ridding, the chief executive of The Financial Times, said. “That has big implications for how people receive news, where they find it and what the quality of their news is.”

Far from being the end of the ‘Social News Era’ for mainstream news outlets, the Facebook changes provide a positive benefit to those same media executives who two months earlier went into meltdown.

Facebook announced the changes at an event it titled ‘Fighting Abuse @ Scale’.

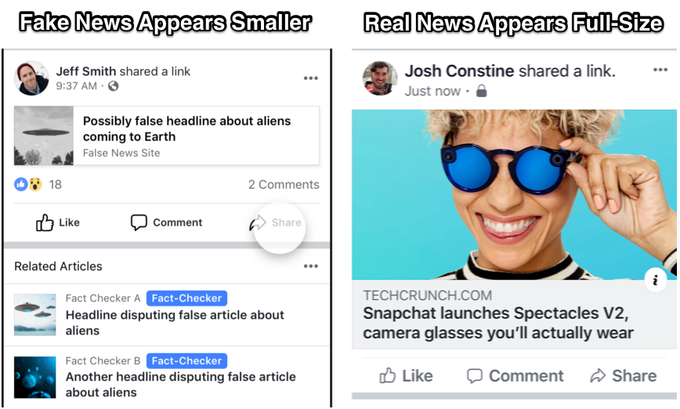

As noted by TechCrunch, Facebook’s changes basically boil down to using a couple of methods to reduce the impact of ‘unverified’ sources.

1 – Reducing the visibility of ‘unverified’ fake news. So in essence, if you’re the New York Times you’ll be preferenced over some no-name website set up by Russian Intelligence a week prior to an election.

2 – Using machine learning – and human fact checkers. This is done through what Facebook calls its ‘eco-system’ approach and includes:

- Account Creation – If accounts are created using fake identities or networks of bad actors, they’re removed.

- Asset Creation – Facebook looks for similarities to shut down clusters of fraudulently created Pages and inhibit the domains they’re connected to.

- Ad Policies – Malicious Pages and domains that exhibit signatures of wrong use lose the ability to buy or host ads, which deters them from growing their audience or monetizing it.

- False Content Creation – Facebook applies machine learning to text and images to find patterns that indicate risk.

- Distribution – To limit the spread of false news, Facebook works with fact-checkers. If they debunk an article, its size shrinks, Related Articles are appended and Facebook downranks the stories in News Feed.

So, for all the talk about the dangers of the Cambridge Analytica scandal and the company’s so-called use of ‘psychographic microtargeting’, the real issue for Facebook was not so much how the stolen data was used – the real issue was another company undercutting Facebook’s already established business model.

In 2012 Facebook was already running experiments on its platform where it manipulated nearly 700,000 users’ news feeds to see whether it would affect their emotions. The study, published in 2014, was roundly criticised for breaching ethical guidelines.

In Australia, Facebook was also exposed offering up eerily similar kinds of psychographic targeting to advertisers.

As detailed in an internal Facebook report leaked to The Australian, this included the capacity to identify when teenagers feel “insecure”, “worthless” and “need a confidence boost”.

Far from being old data, as was the case with Cambridge Analytica, the leaked report boasted how Facebook could also:

‘monitor posts and photos in real time to determine when young people feel “stressed”, “defeated”, “overwhelmed”, “anxious”, “nervous”, “stupid”, “silly”, “useless” and a “failure”’.

Written for one of the nation’s top banks, the report also claimed it had a database of 1.9 million high schoolers, 1.5 million tertiary students and 3 million young workers.

How does all this relate to the Voller Case?

I think the point was best expressed by the New York Times when it wrote:

Over the years, as Facebook and media companies entangled themselves with each other, users’ feeds that had once been filled with chatter about graduations, changing relationship statuses and other subjects belonging to the private sphere morphed into digital spaces rife with public matters — news! — and the endless and endlessly contentious comment threads that went with them.

The relationship to the Voller case is this: News organisations who have willingly ‘entangled themselves’ with Facebook derive a transactional benefit from those same users actively engaging with their content.

This entanglement comes at a cost. It also comes with a responsibility to understand how Facebook works and use the tools available to ensure you don’t fall foul of the law.

Setting to one side the comments relating to Voller, I’ve seen female journalists threatened with rape and death, and extreme forms of hate speech openly spouted, in comments on Facebook posts published by the same media organisations who are defendants in Voller’s case.

Cases like this are a wake-up call.
